Series

- Part 1: Install and Configure Caffe on Windows 10

- Part 2: Install and Configure Caffe on Ubuntu 16.04

Guide

requirements:

- NVIDIA driver 396.54

- CUDA 8.0 + cudnn 6.0.21, or CUDA 9.2 + cudnn 7.1.4

- opencv 3.1.0 -> 3.3.0

- python 2.7 + numpy 1.15.1, or python 3.5.2 + numpy 1.16.2

- protobuf 3.6.1 (static)

- caffe latest

The default protobuf, 2.6.1, is tested and works.

The latest 3.6.1 also works here, but caffe must then be compiled with -std=c++11.
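The rule above can be sketched as a small version check. This is only an illustration: the helper names version_ge and cxx_flag_for_protobuf are hypothetical, and the 3.6.0 threshold is my assumption about the first protobuf release that requires C++11. It relies on GNU sort -V, which Ubuntu 16.04 ships.

```shell
# version_ge A B: succeed when version A >= version B (uses GNU sort -V).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n1)" = "$2" ]
}

# cxx_flag_for_protobuf VERSION: print the extra compiler flag, if any.
# Assumption: 3.6.0 is treated as the first protobuf release needing C++11.
cxx_flag_for_protobuf() {
  if version_ge "$1" "3.6.0"; then
    echo "-std=c++11"
  fi
}

cxx_flag_for_protobuf 2.6.1   # prints nothing
cxx_flag_for_protobuf 3.6.1   # prints -std=c++11
```

In practice the version string could come from protoc --version, which prints a line such as libprotoc 3.6.1.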

install CUDA + cudnn

see install and configure cuda 9.2 with cudnn 7.1 on ubuntu 16.04

tips: we need to recompile caffe with cudnn 7.1

Before compiling caffe, move the caffe/python/caffe/selective_search_ijcv_with_python folder outside the caffe source folder; otherwise an error occurs.
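A minimal sketch of that move, assuming it is run from the directory that contains the caffe checkout. The helper move_aside and the destination ../caffe_stash are hypothetical names; any location outside the source tree should do.

```shell
# move_aside SRC DEST_DIR: move directory SRC into DEST_DIR if SRC exists.
move_aside() {
  if [ -d "$1" ]; then
    mkdir -p "$2"
    mv "$1" "$2"/
    echo "moved $1 to $2"
  else
    echo "nothing to move: $1"
  fi
}

# ../caffe_stash is a hypothetical destination outside the source tree.
move_aside caffe/python/caffe/selective_search_ijcv_with_python ../caffe_stash
```

The existence check makes the step safe to repeat, for example after a fresh clone that does not contain the folder.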

install protobuf

which protoc

caffe uses the static libprotoc 3.6.1.

install opencv

which opencv_version

python

python --version

check numpy version

import numpy

compile caffe

clone repo

git clone https://github.com/BVLC/caffe.git

update repo

update at 20180822.

If you changed your local Makefile and git pull origin master hits a merge conflict, restore the Makefile from HEAD:

git checkout HEAD Makefile

configure

mkdir build && cd build && cmake-gui ..

cmake-gui options

USE_CUDNN ON

USE_OPENCV ON

BUILD_python ON

BUILD_python_layer ON

BLAS atlas

CMAKE_CXX_FLAGS -std=c++11

CMAKE_INSTALL_PREFIX /home/kezunlin/program/caffe/build/install

Use -std=c++11.
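For a build without the GUI, the same options can be passed to cmake on the command line. The sketch below only prints the command so it can be reviewed first. The function name caffe_cmake_cmd is hypothetical, the default prefix is an assumption, and the option names are spelled as I believe Caffe's CMakeLists.txt defines them.

```shell
# Print a cmake command line equivalent to the cmake-gui options above.
# $1 is the install prefix; the default "$PWD/install" is an assumption.
caffe_cmake_cmd() {
  prefix="${1:-$PWD/install}"
  printf 'cmake'
  printf ' %s' \
    -DUSE_CUDNN=ON \
    -DUSE_OPENCV=ON \
    -DBUILD_python=ON \
    -DBUILD_python_layer=ON \
    -DBLAS=atlas \
    -DCMAKE_CXX_FLAGS=-std=c++11 \
    "-DCMAKE_INSTALL_PREFIX=$prefix" \
    ..
  printf '\n'
}

# Show the command; paste it into a shell opened in caffe/build.
caffe_cmake_cmd /home/kezunlin/program/caffe/build/install
```

Printing rather than running keeps the sketch harmless on a machine where the dependencies are not installed yet.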

configure output

Dependencies:

BLAS : Yes (Atlas)

Boost : Yes (ver. 1.66)

glog : Yes

gflags : Yes

protobuf : Yes (ver. 3.6.1)

lmdb : Yes (ver. 0.9.17)

LevelDB : Yes (ver. 1.18)

Snappy : Yes (ver. 1.1.3)

OpenCV : Yes (ver. 3.1.0)

CUDA : Yes (ver. 9.2)

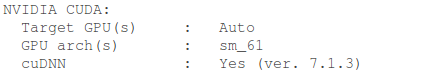

NVIDIA CUDA:

Target GPU(s) : Auto

GPU arch(s) : sm_61

cuDNN : Yes (ver. 7.1.4)

Python:

Interpreter : /usr/bin/python2.7 (ver. 2.7.12)

Libraries : /usr/lib/x86_64-linux-gnu/libpython2.7.so (ver 2.7.12)

NumPy : /usr/lib/python2.7/dist-packages/numpy/core/include (ver 1.15.1)

Documentaion:

Doxygen : /usr/bin/doxygen (1.8.11)

config_file : /home/kezunlin/program/caffe/.Doxyfile

Install:

Install path : /home/kezunlin/program/caffe/build/install

Configuring done

We can also use python 3.5 and numpy 1.16.2:

Python:

Interpreter : /usr/bin/python3 (ver. 3.5.2)

Libraries : /usr/lib/x86_64-linux-gnu/libpython3.5m.so (ver 3.5.2)

NumPy : /home/kezunlin/.local/lib/python3.5/site-packages/numpy/core/include (ver 1.16.2)

use -std=c++11, otherwise errors occur

make -j8

[ 1%] Running C++/Python protocol buffer compiler on /home/kezunlin/program/caffe/src/caffe/proto/caffe.proto

Scanning dependencies of target caffeproto

[ 1%] Building CXX object src/caffe/CMakeFiles/caffeproto.dir/__/__/include/caffe/proto/caffe.pb.cc.o

In file included from /usr/include/c++/5/mutex:35:0,

from /usr/local/include/google/protobuf/stubs/mutex.h:33,

from /usr/local/include/google/protobuf/stubs/common.h:52,

from /home/kezunlin/program/caffe/build/include/caffe/proto/caffe.pb.h:9,

from /home/kezunlin/program/caffe/build/include/caffe/proto/caffe.pb.cc:4:

/usr/include/c++/5/bits/c++0x_warning.h:32:2: error: #error This file requires compiler and library support for the ISO C++ 2011 standard. This support must be enabled with the -std=c++11 or -std=gnu++11 compiler options.

#error This file requires compiler and library support \

fix gcc error

edit /usr/local/cuda/include/host_config.h

Comment out line 115 of that file.

fix gflags error

- caffe/include/caffe/common.hpp

- caffe/examples/mnist/convert_mnist_data.cpp

Comment out the ifndef

// #ifndef GFLAGS_GFLAGS_H_

compile

make clean

output

[ 1%] Running C++/Python protocol buffer compiler on /home/kezunlin/program/caffe/src/caffe/proto/caffe.proto

Scanning dependencies of target caffeproto

[ 1%] Building CXX object src/caffe/CMakeFiles/caffeproto.dir/__/__/include/caffe/proto/caffe.pb.cc.o

[ 1%] Linking CXX static library ../../lib/libcaffeproto.a

[ 1%] Built target caffeproto

libcaffeproto.a is a static library.

install

make install

Files are installed to the build/install folder:

ls build/install/lib

advanced

- INTERFACE_INCLUDE_DIRECTORIES

- INTERFACE_LINK_LIBRARIES

Target “caffe” has an INTERFACE_LINK_LIBRARIES property which differs from its LINK_INTERFACE_LIBRARIES properties.

Play with Caffe

python caffe

fix python caffe

fix ipython 6.1 version conflict

vim caffe/python/requirements.txt

ipython>=3.0.0

reinstall ipython

pip install -r requirements.txt

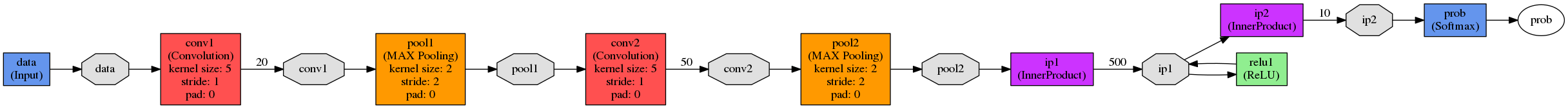

python draw net

sudo apt-get install graphviz

We need to install graphviz; otherwise we get ERROR: "dot" not found in path.
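Before drawing a net, it may help to confirm that the dot executable from graphviz is actually on the PATH. A minimal sketch, where require_tool is a hypothetical helper name:

```shell
# require_tool NAME: succeed if NAME is an executable found on the PATH.
require_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "found $1"
  else
    echo "$1 not found in PATH" >&2
    return 1
  fi
}

# Suggest the install command from this guide when dot is missing.
require_tool dot || echo "install it with: sudo apt-get install graphviz"
```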

draw net

cd $CAFFE_HOME

cpp caffe

train net

cd caffe

output results

I0912 15:57:28.812655 14094 solver.cpp:327] Iteration 10000, loss = 0.00272129

I0912 15:57:28.812675 14094 solver.cpp:347] Iteration 10000, Testing net (#0)

I0912 15:57:28.891481 14100 data_layer.cpp:73] Restarting data prefetching from start.

I0912 15:57:28.893678 14094 solver.cpp:414] Test net output #0: accuracy = 0.9904

I0912 15:57:28.893707 14094 solver.cpp:414] Test net output #1: loss = 0.0276084 (* 1 = 0.0276084 loss)

I0912 15:57:28.893714 14094 solver.cpp:332] Optimization Done.

I0912 15:57:28.893719 14094 caffe.cpp:250] Optimization Done.

Tip: caffe fails with the following error when the lmdb data is missing.

I0417 13:28:17.764714 35030 layer_factory.hpp:77] Creating layer mnist

F0417 13:28:17.765067 35030 db_lmdb.hpp:15] Check failed: mdb_status == 0 (2 vs. 0) No such file or directory
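One way to catch this before training is to check that each lmdb directory contains its data.mdb file. The sketch below assumes the net is the stock MNIST example and that the databases live in the directories that example normally uses. Both the paths and the helper name check_lmdb are assumptions, not taken from the log above.

```shell
# check_lmdb DIR: succeed if DIR looks like an lmdb database (has data.mdb).
check_lmdb() {
  if [ -f "$1/data.mdb" ]; then
    echo "ok: $1"
  else
    echo "missing: $1"
    return 1
  fi
}

# Assumed paths of the MNIST example databases, relative to the caffe root.
for db in examples/mnist/mnist_train_lmdb examples/mnist/mnist_test_lmdb; do
  check_lmdb "$db" ||
    echo "create it first: ./data/mnist/get_mnist.sh, then ./examples/mnist/create_mnist.sh"
done
```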

---------------------

upgrade net

./tools/upgrade_net_proto_text old.prototxt new.prototxt

caffe time

yolov3

./build/tools/caffe time --model='det/yolov3/yolov3.prototxt' --iterations=100 --gpu=0

yolov3 5 class

./build/tools/caffe time --model='det/autotrain/yolo3-5c.prototxt' --iterations=100 --gpu=0

Example

Caffe Classifier


CMakeLists.txt

find_package(OpenCV REQUIRED)

run

./demo

if error occurs:

libcaffe.so.1.0.0 => not found

edit .bashrc

# for caffe
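A typical .bashrc entry for this kind of "not found" error adds the install lib folder to the dynamic loader's search path. The sketch below is an assumption rather than the exact lines used here: CAFFE_INSTALL is a hypothetical variable name, and its value is taken from the CMAKE_INSTALL_PREFIX set earlier in this guide.

```shell
# for caffe (sketch; CAFFE_INSTALL is a hypothetical variable name)
CAFFE_INSTALL=/home/kezunlin/program/caffe/build/install

# Prepend the lib folder, keeping any existing LD_LIBRARY_PATH entries.
export LD_LIBRARY_PATH="$CAFFE_INSTALL/lib${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}"
```

After editing, run source ~/.bashrc or open a new terminal, then check with ldd ./demo that libcaffe.so.1.0.0 now resolves.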


History

- 20180807: created.

- 20180822: update cmake-gui for caffe